Switching gears to an entirely acoustic work, I recently finished composing a piece for solo flute titled State Change. The program notes read:

Many substances exist in different phases of matter, or “states.” When external energy is applied, solids become liquids become gases, and when that outside energy ceases, a gas becomes a liquid becomes a solid. Notably, during some state transitions, properties of the substance change discontinuously, resulting in abrupt changes in the volume or mass of the substance.

This piece explores a sonic analogue of state changes, modifying parameters of the flute's sound to transition between different textures (“states”).

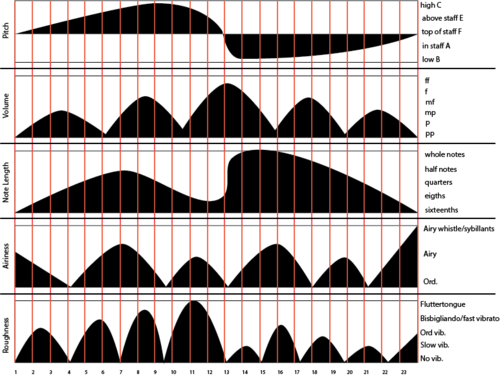

My process for composing this piece was new to me. Taking inspiration from advice given to me by Tom Lopez (who mentioned that it was a compositional technique used by Morton Subotnick), I first created a “Parameter Map,” which plotted parameters such as pitch, volume, and “airiness” over time, with the time dimension divided into 24 discrete segments. The solid black areas show each parameter's value over time.

State Change Parameter Map

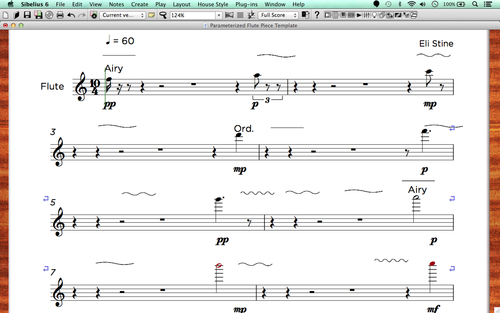

I then took the parameter values at the start of each of the 24 segments and represented them as the first note of each of 24 measures in a score, with the corresponding pitch, note length, volume, and so on (e.g., D4, quarter note, mezzo forte). I call this document a “Notation Template.”

State Change Notation Template

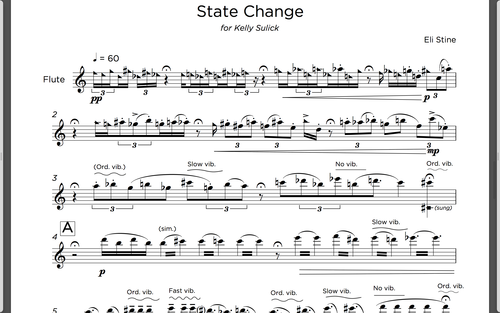

From here, my compositional goal was to “fill in” the measures, making sure that the transitions between parametric values (molto vibrato to no vibrato, for example) were smooth and engaging. This was the part of the process I found most intriguing. It gave me the freedom to create interesting gestures without worrying about the overall trajectory: at every point, I knew the goal of each gesture I was creating and the trajectory it grew out of. Put more colloquially, thanks to the notation template and parameter map, I always knew “where I was coming from and where I was going.”

Ultimately, I modified some of the timings and parametric values, but the process helped me arrive at a piece and a formal structure that I would not otherwise have created. My favorite moments in the piece are those that mirror the “abrupt changes in… volume or mass” alluded to in the program notes: times when the changing parameters briefly interact to create a new texture before diverging.

State Change Final Score

I hope to continue exploring this and other experimental compositional techniques in future pieces.